Workshop Exercises

This workshop demonstrates how to build an agentic testing workflow that analyzes GitHub pull requests, gathers context from external sources such as user stories on a scrum board, and then generates and executes test plans autonomously.

Workflow Diagram

What We're Testing

We'll work with two key applications to demonstrate the complete testing workflow:

Demo Webshop

A simple fruit shop with basic browsing and shopping basket functionality.

https://demo-webshop.vercel.app/

You'll create a user story on the scrum board, implement the feature, and watch as the AI agents automatically generate and execute tests when you create a pull request.

How Does It Work

The workflow combines AI agents with browser automation to analyze code changes and execute tests autonomously.

Agents

The Test Plan Agent analyzes GitHub pull requests and generates browser-executable test cases. Agents can use tools to interact with external systems and data sources.

    export const testplanAgent = new Agent({
      name: "Test Plan Agent",
      model: openai("gpt-5-mini"),
      // Tools the agent may call while building the test plan
      tools: {
        getPullRequestDiff,
        getPullRequestComments,
        getPullRequest,
        getScrumIssue,
      },
      instructions: `...`
    });

System instructions are crucial for guiding agent behavior. In the instructions, you can use capital letters to emphasize critical constraints. Clear tool instructions are equally essential: specify when and how the agent should use each tool.
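To illustrate these points, here is a minimal sketch of how such instructions might be assembled. Only the tool names come from the agent definition above. The `buildInstructions` helper, the guidance text, and the constraints are hypothetical and are not the instructions used in the workshop repository.

```typescript
// Hypothetical per-tool guidance. The tool names mirror the agent definition;
// the guidance text is illustrative only.
interface ToolGuidance {
  tool: string;
  when: string;
}

const toolGuidance: ToolGuidance[] = [
  { tool: "getPullRequest", when: "first, to read the title and state of the pull request" },
  { tool: "getPullRequestDiff", when: "to see which files and lines changed" },
  { tool: "getPullRequestComments", when: "to pick up reviewer context" },
  { tool: "getScrumIssue", when: "when the pull request references an issue number" },
];

// Builds a system prompt: a role line, one line per tool, and constraints
// with the critical words in capital letters.
function buildInstructions(guidance: ToolGuidance[]): string {
  const toolLines = guidance.map((g) => `- Use ${g.tool} ${g.when}.`);
  return [
    "You are a test plan agent for pull requests.",
    "",
    "Tools:",
    ...toolLines,
    "",
    "Constraints:",
    "- ONLY generate test cases that can be executed in a browser.",
    "- NEVER invent features that are not part of the diff or the user story.",
  ].join("\n");
}

const instructions = buildInstructions(toolGuidance);
```

Keeping the tool guidance as data next to the prompt makes it harder for the two to drift apart when a tool is added or removed.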

Tools

A tool is just a function that the agent can call. Here's a simple example:

    export const getPullRequest = createTool({
      id: "get-pull-request",
      inputSchema: z.object({
        pullRequestUrl: z.string(),
      }),
      description: `Fetches a GitHub pull request by URL.`,
      execute: async ({ context: { pullRequestUrl } }) => {
        // Simplified: deriving apiUrl from pullRequestUrl is omitted here.
        const response = await githubClient.get(apiUrl);
        // Assumes githubClient exposes the parsed response body as response.data.
        const { data } = response;
        // Return only the fields the agent needs.
        return {
          number: data.number,
          title: data.title,
          state: data.state,
        };
      },
    });

For simplicity, we use an external agent via Browser Use to execute test cases. This browser agent has its own tools and works much like the test plan agent, but it is not defined in this workshop repository. The workflow sends test cases to Browser Use, which runs them in a real browser environment and reports the results back.

You can view live runs on the Browser Use dashboard.
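Returning to the `getPullRequest` tool: it receives the browser URL of a pull request, while the GitHub REST API addresses the same resource under `/repos/{owner}/{repo}/pulls/{number}`. The following is a minimal sketch of that translation. The helper names `parsePullRequestUrl` and `toApiUrl` are hypothetical and are not taken from the workshop repository, which may derive the API URL differently.

```typescript
// Coordinates that identify a pull request.
interface PullRequestRef {
  owner: string;
  repo: string;
  number: number;
}

// Extracts owner, repository and number from a URL of the form
// https://github.com/<owner>/<repo>/pull/<number>.
function parsePullRequestUrl(pullRequestUrl: string): PullRequestRef {
  const match = /^https:\/\/github\.com\/([^/]+)\/([^/]+)\/pull\/(\d+)/.exec(pullRequestUrl);
  if (!match) {
    throw new Error(`Not a GitHub pull request URL: ${pullRequestUrl}`);
  }
  return { owner: match[1], repo: match[2], number: Number(match[3]) };
}

// Builds the GitHub REST API URL for the same pull request.
function toApiUrl(ref: PullRequestRef): string {
  return `https://api.github.com/repos/${ref.owner}/${ref.repo}/pulls/${ref.number}`;
}
```

For example, `toApiUrl(parsePullRequestUrl("https://github.com/octocat/hello-world/pull/42"))` yields `https://api.github.com/repos/octocat/hello-world/pulls/42` (the repository name here is made up). Rejecting malformed URLs early gives the agent a clear error message instead of a confusing API failure.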

Trigger Workflow

The workflow is triggered via GitHub Actions on pull request events. By hosting our Mastra setup, we expose REST endpoints to trigger workflows, interact with agents, and access tools.

The GitHub Actions workflow performs two key operations:

- Create Run: Initializes a new workflow run by calling the create-run endpoint

- Start Async: Starts the workflow execution asynchronously with the run ID and pull request URL

This pattern allows the workflow to run asynchronously without blocking the GitHub Actions runner, enabling long-running AI operations.
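The two operations can be sketched as a pair of HTTP calls. This is an illustration under stated assumptions, not the workshop's GitHub Actions definition: the endpoint paths, the `runId` response field, and the `inputData` payload shape are assumptions to verify against the REST API of your hosted Mastra instance, and `triggerWorkflow` and `FetchLike` are hypothetical names. The fetch function is passed in so that the sketch can be exercised without a network.

```typescript
// Minimal subset of the fetch API this sketch relies on, so a fake can be injected.
type FetchLike = (
  url: string,
  init: { method: string; headers: Record<string, string>; body?: string },
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

// ASSUMPTION: the endpoint paths and payload shapes below are illustrative.
async function triggerWorkflow(
  fetchFn: FetchLike,
  baseUrl: string,
  workflowId: string,
  pullRequestUrl: string,
): Promise<string> {
  const headers = { "Content-Type": "application/json" };

  // Create Run: initialize a new run and read its ID from the response.
  const createRes = await fetchFn(`${baseUrl}/api/workflows/${workflowId}/create-run`, {
    method: "POST",
    headers,
  });
  if (!createRes.ok) {
    throw new Error(`create-run failed with status ${createRes.status}`);
  }
  const { runId } = (await createRes.json()) as { runId: string };

  // Start Async: start execution for that run, passing the pull request URL as input.
  const startRes = await fetchFn(
    `${baseUrl}/api/workflows/${workflowId}/start-async?runId=${encodeURIComponent(runId)}`,
    { method: "POST", headers, body: JSON.stringify({ inputData: { pullRequestUrl } }) },
  );
  if (!startRes.ok) {
    throw new Error(`start-async failed with status ${startRes.status}`);
  }
  return runId;
}
```

In a real script you would pass the global `fetch` as `fetchFn` and `pr-workflow` as the workflow ID. Returning the run ID lets the caller log it or look the run up later.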

How It's Working

The workflow orchestrates the test plan agent and browser agent together. It chains steps sequentially:

- Generate test plan

- Post test plan comment

- Map to check if testing is needed

- Wait for preview environment

- Execute tests

- Post test report
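Of the steps above, waiting for the preview environment is the one whose duration is unknown in advance. A common way to implement such a wait is a bounded polling loop. The sketch below is an assumption about how a step like this could work, not the workshop's `waitForPreviewEnvironmentStep`; `waitUntilReady`, `isReady`, `maxAttempts`, and `delayMs` are hypothetical names.

```typescript
// Polls `isReady` until it resolves to true or the attempts run out.
// Returns true if the target became ready, false otherwise.
async function waitUntilReady(
  isReady: () => Promise<boolean>,
  maxAttempts: number,
  delayMs: number,
): Promise<boolean> {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    if (await isReady()) {
      return true;
    }
    // Sleep between attempts, but not after the last one.
    if (attempt < maxAttempts) {
      await new Promise<void>((resolve) => setTimeout(resolve, delayMs));
    }
  }
  return false;
}
```

In practice `isReady` would check whether the preview deployment responds, and a `false` result would let the workflow skip test execution instead of running tests against an environment that is not up.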

    export const prWorkflow = createWorkflow({
      id: "pr-workflow",
      inputSchema: z.object({
        pullRequestUrl: z.string(),
      }),
      outputSchema: genericOutputSchema,
    })
      .then(generateTestPlanStep)
      .then(githubTestPlanCommentStep)
      // Pass only the success flag on to the next step
      .map(async ({ inputData }) => {
        return { success: inputData.success };
      })
      .then(waitForPreviewEnvironmentStep)
      .then(executeTestsStep)
      .then(githubTestReportStep)
      .commit();

Schemas are crucial for ensuring agents produce structured, predictable output. Using Zod schemas, we can enforce a specific data structure that agents must follow, which is essential for reliable agent behavior and seamless data flow between workflow steps.

When an agent generates output, it must conform to the defined schema. This prevents unexpected formats, ensures type safety, and allows downstream steps to confidently access the data they need.
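To make "conforming to a schema" concrete, here is a dependency-free sketch of the kind of check a schema library performs at runtime. The field names mirror the workshop's test plan output (`needsTesting`, `testCases`); the `isTestPlanOutput` and `isRecord` helpers are hypothetical and only illustrate the idea. Zod expresses the same constraints declaratively and adds parsing and error reporting on top.

```typescript
interface TestCase {
  title: string;
  description: string;
}

interface TestPlanOutput {
  needsTesting: boolean;
  testCases: TestCase[];
}

// True for plain objects, false for null, arrays and primitives.
function isRecord(value: unknown): value is Record<string, unknown> {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// Returns true only if `value` has the expected fields with the expected types.
function isTestPlanOutput(value: unknown): value is TestPlanOutput {
  if (!isRecord(value)) return false;
  if (typeof value.needsTesting !== "boolean") return false;
  if (!Array.isArray(value.testCases)) return false;
  return value.testCases.every(
    (tc) => isRecord(tc) && typeof tc.title === "string" && typeof tc.description === "string",
  );
}
```

A downstream step can call a guard like this before reading `testCases`, so a malformed agent response fails at the step boundary and not somewhere deep inside test execution.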

    export const testPlanOutputSchema = z.object({
      needsTesting: z.boolean(),
      testCases: z.array(
        z.object({
          title: z.string(),
          description: z.string(),
        })
      ),
    });

Workshop Exercises

Follow these steps to build and test your AI-powered testing workflow.

- Create a user story on the scrumboard OR use an existing one

- Go to: https://scrum-board-navy.vercel.app/

- Note down the issue number you want to use

-

Open the

demo-webshoprepository in your code editor - Create a new branch:

git checkout -b feature/your-feature-name- Vibe code a new feature based on user story in scrumboard

- Commit your changes with the issue number:

git add .

git commit -m "Add your feature description #10"- Push your branch:

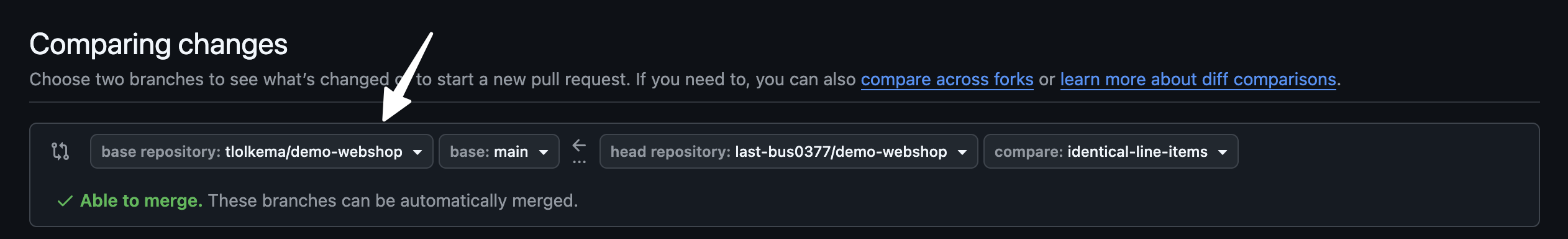

git push origin feature/your-feature-name- Create a pull request on GitHub

Important: Make sure to change the base repository for this pull request to your fork

Start the Local Playground

In the ai-mastra-agent-workshop repository:

    npm run dev

Open the playground URL shown in your terminal (e.g., http://localhost:4116/)

Test the Agents

- Go to the Agents tab

- Select the Test Plan Agent

- Supply your pull request URL and generate a test plan

- Explore the available tools and how the agent uses them

Run the Full Workflow

- Go to the Workflows tab

- Select the PR Workflow

- Supply your pull request URL

- Click "Run" and watch the workflow execute

Monitor Browser Use Sessions

- While the workflow runs, inspect the live browser sessions

- Go to: https://cloud.browser-use.com/sessions

- Watch the AI agent navigate and test your application

Useful Resources

If you're interested in learning more about AI agents:

Now that you've run the workflow locally, take it to the next level by integrating it into a full CI/CD pipeline that runs automatically on every pull request.